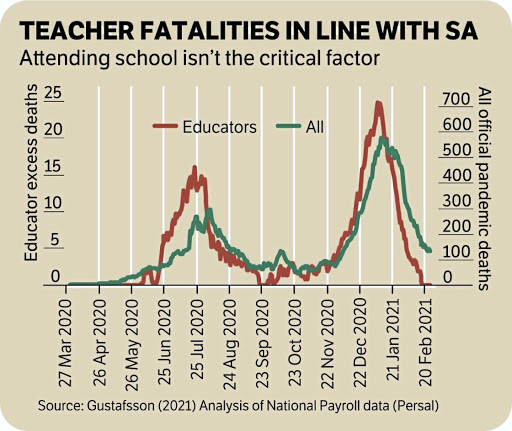

South Africa is currently in a race against time. As COVID waves come and go and new variants emerge, it is clearer than ever that there is only one route out of the mess we find ourselves in: vaccination. On that front there is both good news and bad.

The good news is that both of South Africa’s vaccines, Pfizer and Johnson & Johnson (J&J), seem to offer strong protection against the Delta variant driving the third wave. So if you’ve been vaccinated and developed an immune response (typically 2-4 weeks after vaccination), you are very unlikely to become severely ill from COVID. The other good news is that vaccine acceptance is increasing over time. In an earlier round of the nationally representative National Income Dynamics Study Coronavirus Rapid Mobile Survey (NIDS-CRAM), we reported that in February this year 71% of South Africans said they would get vaccinated. In our latest results, released today, this has increased to 76% in April/May 2021.

The bad news is that the Delta variant is twice as transmissible as the original COVID virus, and hospitals are overwhelmed. Private sector hospital admissions in Gauteng, the Free State and the Northern Cape are exceeding the peaks of the second wave. Gauteng alone had more than 4,000 hospital admissions in the last week of June and, as of 2 July, was at 91% of hospital capacity (public and private).

Unfortunately, at the end of June only 5% of the population had received at least one dose of a vaccine, well behind countries like Pakistan (6%), Botswana (7%), India (20%) and Brazil (35%), and far behind the US (55%) and the UK (67%). In fact, as of 1 July South Africa ranked 126th in the world, with roughly the same vaccination rate as Libya (5,6%) and Venezuela (5,1%), both essentially failed states.

Why is this? Originally we were told that supply was the main constraint. Yet we now have 7,4-million vaccine doses within our borders and have had more supply than we’ve been able to administer since May. To date only 3-million people in South Africa have been vaccinated.

We were also told that we didn’t have enough money. Yet in February this year the Finance Minister announced that there was now “total potential funding for the vaccination programme to about R19-billion”, made up of R6,5-billion to procure and distribute vaccines, R2,4-billion for provincial health departments to administer the vaccines and a contingency reserve of R9-billion “given uncertainty around final costs.” Why is it, then, that five months later we vaccinate only on weekdays and not on weekends? Department of Health spokesperson Dr Lwazi Manzi explained: “Basically, the provinces indicated they don’t have the budget to be able to pay the overtime over weekends.” According to the Western Cape Treasury’s estimates, that’s correct. It finds that ‘operational costs’ (including contract staff, hiring more nurses, overtime and so on) amount to R108 per vaccine dose administered (p.102). If that figure is correct, it will cost provinces R5,8-billion to administer 54-million doses (the number needed to reach 40-million people, since Pfizer requires two doses). That means the contingency reserve is necessary, yet at the time of writing it had not been released to provinces, despite being technically “available.”
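For readers who want to check the arithmetic, the provincial cost estimate is simply the per-dose figure multiplied by the number of doses. The sketch below assumes, as the Treasury-based estimate does, a flat R108 per dose for every dose in every province; `administration_cost` is a hypothetical helper used only for illustration.

```python
# Back-of-envelope check of the provincial cost of administering vaccines.
# Assumption: a flat R108 operational cost per dose (Western Cape Treasury
# estimate) applies to every dose in every province.

COST_PER_DOSE_RAND = 108       # operational cost per dose administered
DOSES_NEEDED = 54_000_000      # doses needed to reach 40-million people


def administration_cost(cost_per_dose: float, doses: int) -> float:
    """Return the total administration cost in rand (hypothetical helper)."""
    return cost_per_dose * doses


total = administration_cost(COST_PER_DOSE_RAND, DOSES_NEEDED)
print(f"R{total / 1e9:.1f}-billion")  # prints "R5.8-billion"
```

The exact product is R5,832-billion, which rounds to the R5,8-billion quoted above.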

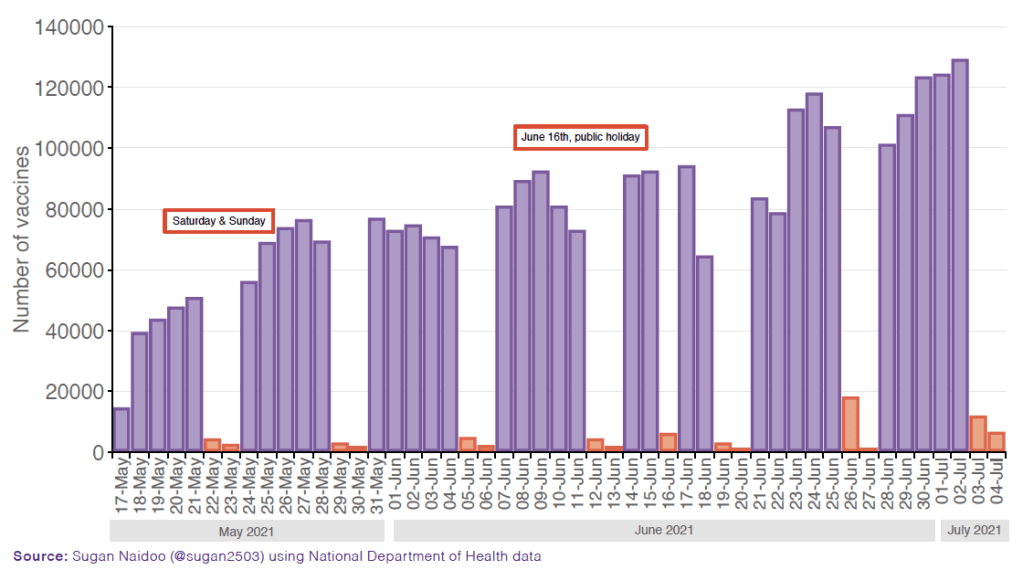

So, practically speaking, what does this look like? Figure 1 shows daily vaccination numbers from 17 May to 4 July 2021. Vaccinations virtually disappear every Saturday and Sunday, as well as on 16 June, a public holiday.

Figure 1: Vaccines per day (17 May to 4 July 2021)

Using the average vaccination rates of the Friday before and the Monday after, I estimate that between 17 May and 5 July an additional 1,3-million shots could have been administered if we had vaccinated on Saturdays and Sundays, as well as on 16 June. Put differently, we are 1,3-million jabs behind schedule because we aren’t vaccinating on weekends.

What this comes back to is state capacity. The South African state does not currently employ people primarily on the basis of competence and, as a result, is not able to implement its own plans, let alone expedite them. In response to a parliamentary question from the opposition earlier this year, Minister Senzo Mchunu reported that 35% of the public sector’s 9,500 most senior managers (at national and provincial level) “do not have the required qualifications and credentials for the positions they currently occupy.”
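The estimate of 1,3-million missed jabs can be sketched as a simple gap-filling calculation. This is a minimal sketch under stated assumptions, not the author's actual code: it assumes daily counts are held in a dictionary keyed by date, treats weekends and 16 June as "closed" days, imputes each closed day as the average of the nearest open day on either side (the Friday and Monday for a weekend, and the Tuesday and Thursday around 16 June), and subtracts the doses actually given that day. The function name `missed_doses` is hypothetical, and the counts in the usage example are made up for illustration.

```python
from datetime import date, timedelta


def missed_doses(daily: dict[date, int], closed: set[date]) -> float:
    """Estimate the extra doses that could have been given on closed days.

    Each closed day (weekend or public holiday) is imputed as the average of
    the nearest open day before it and the nearest open day after it; for a
    weekend that is the Friday and the following Monday. Doses actually given
    on the closed day are subtracted, so the result counts only additional
    shots. Assumes every closed day is bracketed by open days in `daily`.
    """

    def nearest_open(day: date, step: int) -> int:
        # Walk backwards (step=-1) or forwards (step=+1) past closed days.
        d = day + timedelta(days=step)
        while d in closed:
            d += timedelta(days=step)
        return daily[d]

    total = 0.0
    for day in sorted(closed):
        imputed = (nearest_open(day, -1) + nearest_open(day, +1)) / 2
        total += imputed - daily.get(day, 0)
    return total


# Hypothetical counts for one weekend (illustrative only, not real data).
week = {
    date(2021, 5, 21): 100_000,  # Friday
    date(2021, 5, 22): 5_000,    # Saturday
    date(2021, 5, 23): 2_000,    # Sunday
    date(2021, 5, 24): 120_000,  # Monday
}
weekend = {date(2021, 5, 22), date(2021, 5, 23)}
print(missed_doses(week, weekend))  # prints 213000.0
```

Summing this over every weekend and holiday in the period gives the kind of cumulative shortfall described above; the result is only as good as the assumption that weekend demand would have matched the surrounding weekdays.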

Does this help to explain why, eight weeks into the national vaccination programme, we are still unable to source, unlock, transfer or distribute the funds needed to pay staff overtime so that they can vaccinate on weekends? How is it that the National Treasury announced R19-billion in available funds in February, but in July the Department of Health is still telling us there is no money? It’s as if we are fighting a forest fire on weekdays and then sending the firefighters home for the weekend because we can’t pay them overtime, even while the fire rages on.

There is also the question of whether nurses should be the only people allowed to administer vaccines. The process is relatively straightforward, and given the limited number of nurses in the country and the need to vaccinate 40-million South Africans in a year, others should also be authorised to administer COVID-19 vaccines. This is exactly what the United States has done. In February this year it issued the “Sixth Amendment to Declaration Under the Public Readiness and Emergency Preparedness (PREP) Act for Medical Countermeasures Against COVID-19”. This essentially limits the medical liability of military personnel administering the vaccines. As the US military explains: “The PREP Act allows the Department of Health and Human Services to issue a declaration to provide legal protections to certain military personnel involved in mass vaccination efforts.” As a result, the US Army has now administered more than 1-million vaccine doses in America.

Why can’t some of South Africa’s 70,000 Community Health Workers administer vaccines under the supervision of nurses at large sites with clinical oversight? What is the point of declaring a national state of disaster under the Disaster Management Act (and perpetually extending it) if we don’t actually use it to implement drastic measures to avert the disaster? If the Health Professions Council of South Africa (HPCSA) insists that only nurses can do it, it should be summoned to Parliament to explain why. Why can’t Parliament issue a similar liability waiver for trained Community Health Workers for the duration of the pandemic? After all, 3-billion doses of COVID-19 vaccines have now been administered worldwide, with no serious side effects in 99,99% of cases. This is now the most studied medical event in human history.

The government is already well behind its own vaccination plan, having vaccinated only 60% of its target for the end of June (3-million of a forecast 5-million people), and is an entire age category behind schedule. We are currently administering only about half (130,000) of the daily doses required (250,000) to meet the target of 40-million vaccinations by February 2022.

The situation at hand also points to a lack of coherent leadership. The former President, under whose watch thousands of incompetent cadres were deployed to (and remain in) high office, is now en route to prison. Our Health Minister is on paid leave amid a corruption investigation, and Deputy President David Mabuza is currently in Russia for a “medical consultation.” The Deputy President, who took “long leave” for the Russia trip, is also the Chairperson of the Inter-Ministerial Committee on Vaccines.

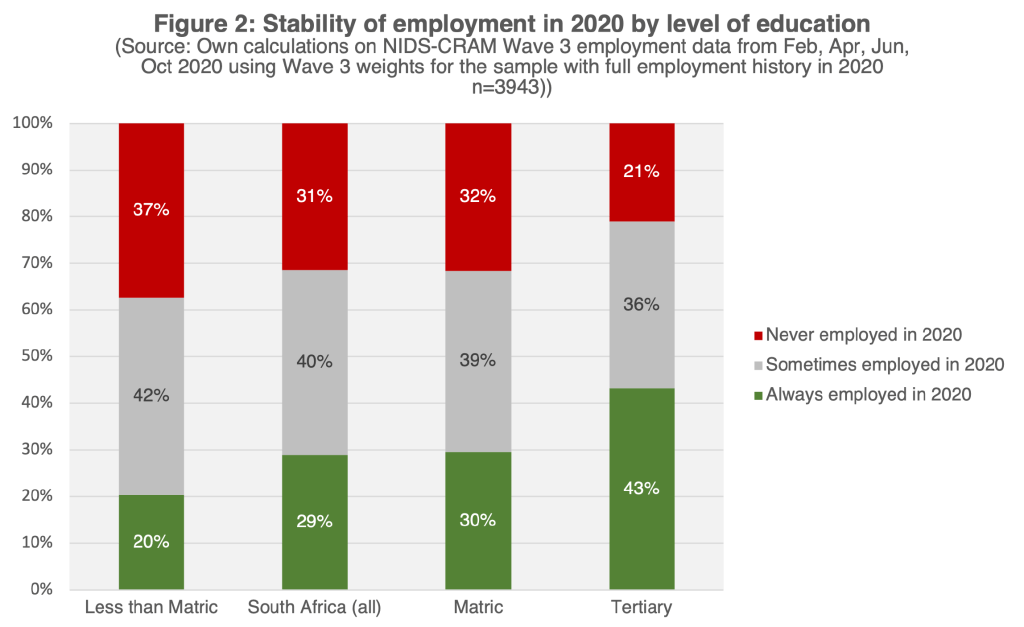

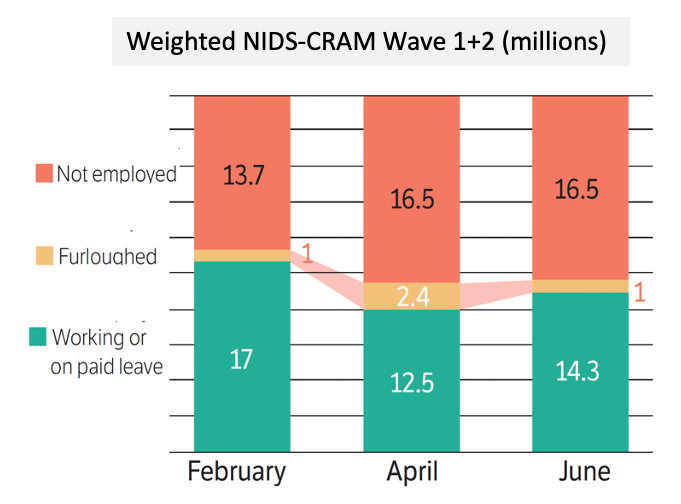

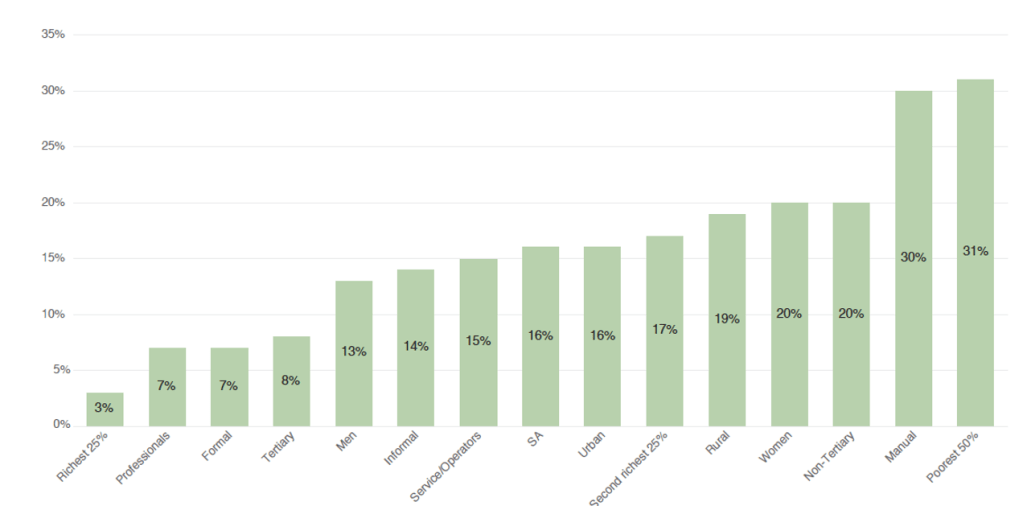

Why does all of this matter? It matters because the coronavirus pandemic is causing suffering on a scale we have not seen before in South Africa. The latest NIDS-CRAM results, released today (8 July), paint a grim picture of the pandemic’s socioeconomic impact.

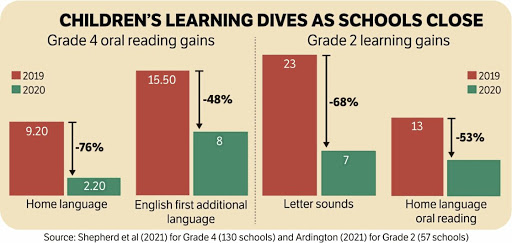

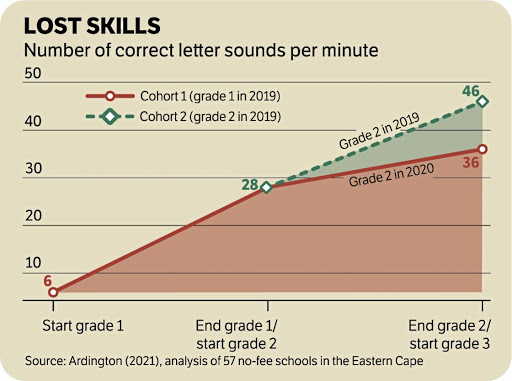

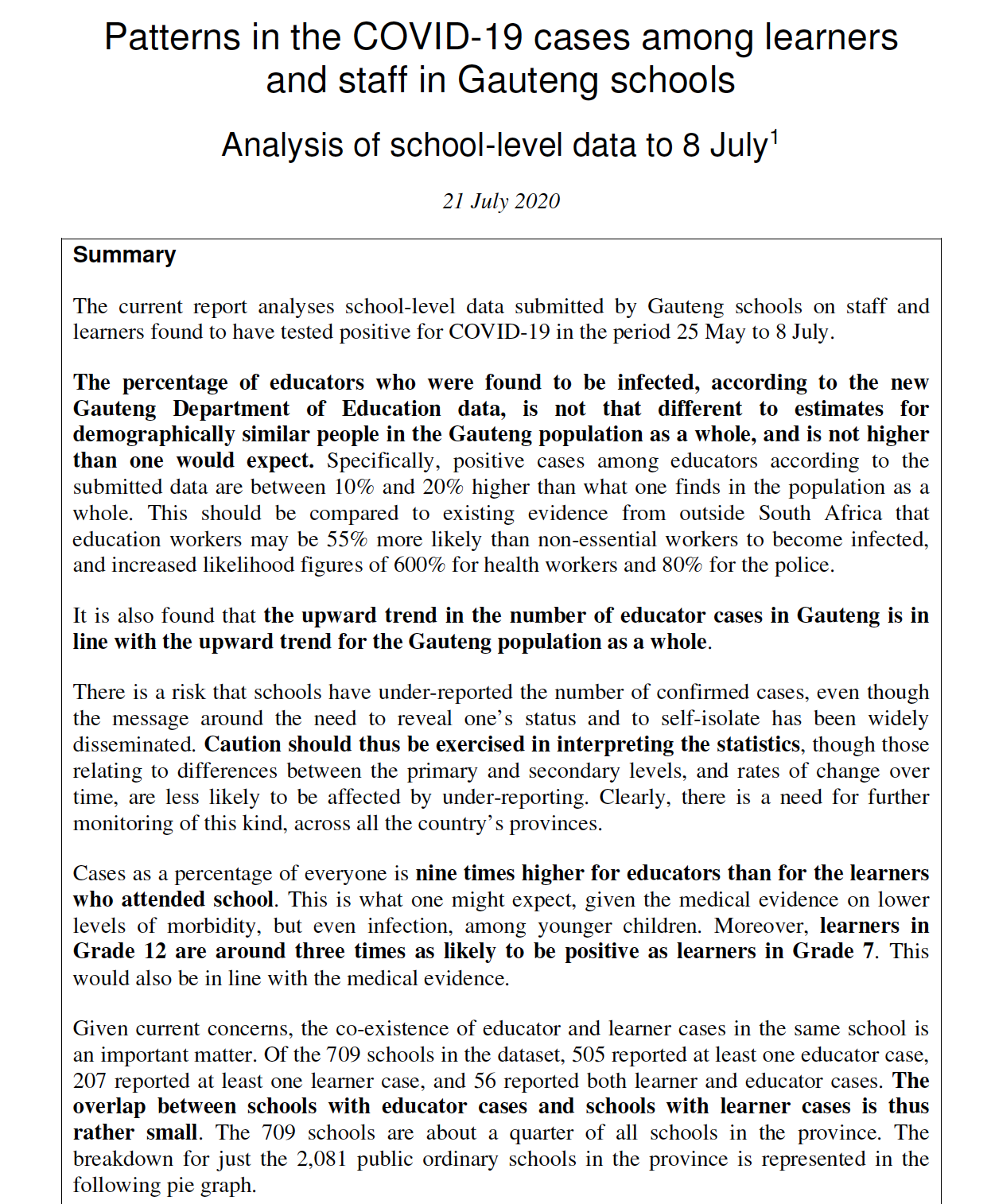

When asked in April and May this year, 10% of South African households with school-going children said that at least one child in their household had not returned to school since the beginning of 2021. That means that school dropout among those aged 7-17 years has more than tripled, from 230,000 pre-pandemic to 750,000 in April/May, i.e. roughly 500,000 additional children have dropped out of school during the pandemic. Whether this dropout is temporary or permanent is as yet unknown, although previous research shows that the longer children remain out of school, the higher the likelihood of permanent dropout.

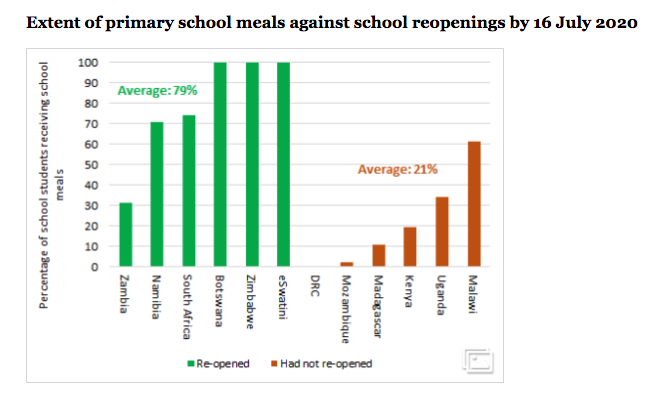

By June of this year the average primary school child had also lost 70-100% of a year of learning compared to previous cohorts. That is to say, the average Grade 3 child in June 2021 knows about as much as the average Grade 2 child did in June 2019. Losses of this size will take more than a decade to recover. Ongoing rotational timetables also mean that children’s access to free school meals is compromised. While access has improved, from 46% in November 2020 to 56% in April 2021, it remains below the pre-pandemic level of 65%. The Department of Basic Education’s own reporting to the High Court confirms this.

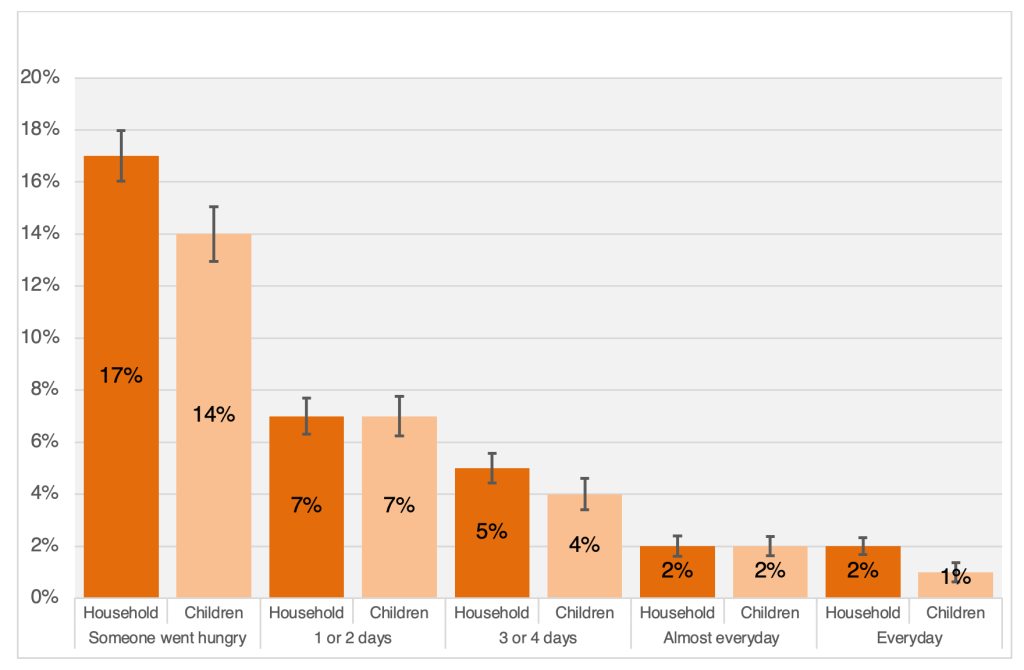

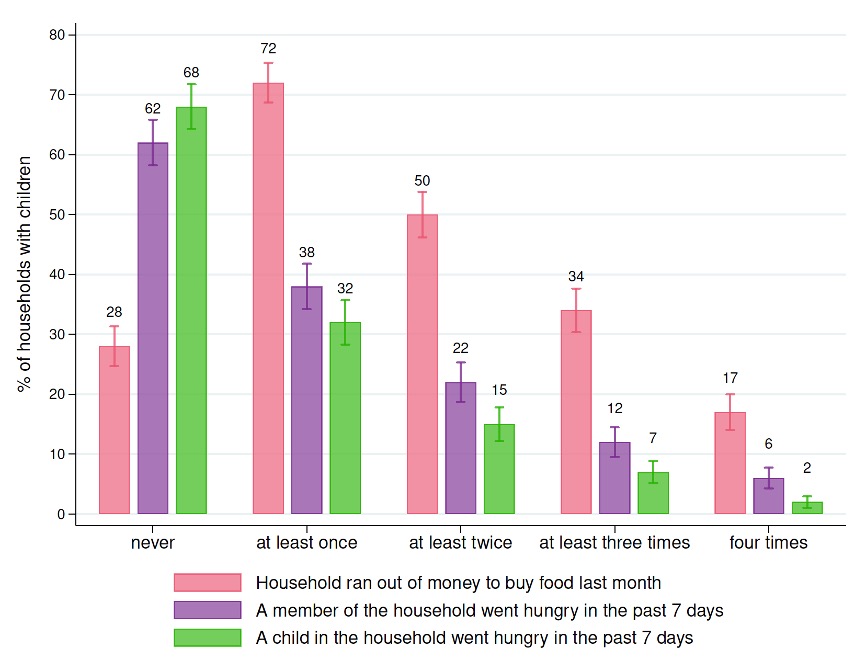

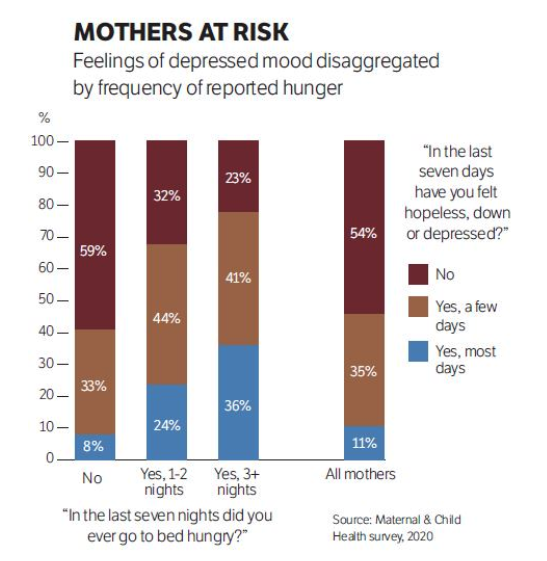

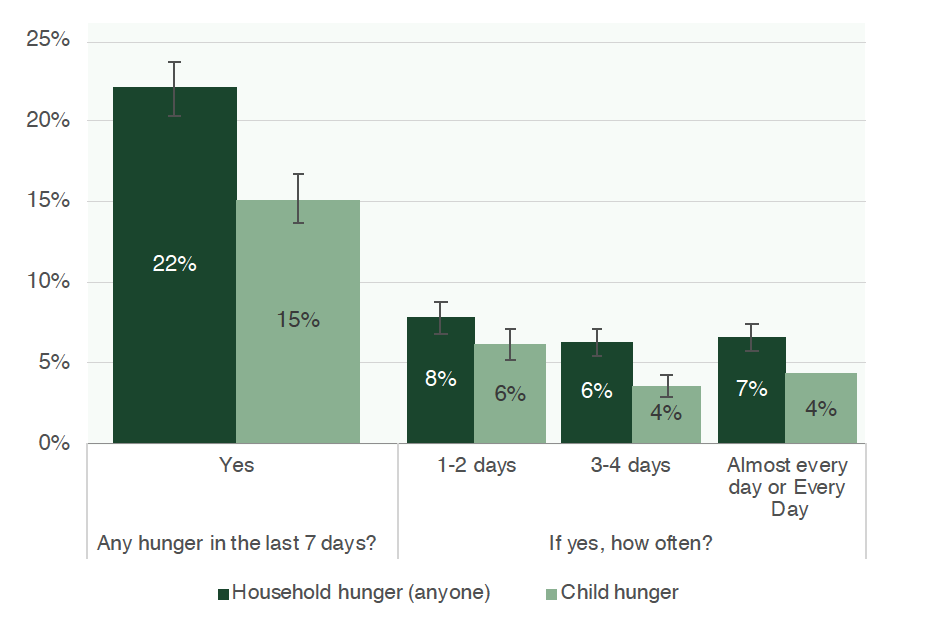

Throughout all waves of NIDS-CRAM, respondents were asked whether anyone in the household had gone hungry in the last seven days because there wasn’t enough money for food. If there was a child in the household, respondents were also asked whether any child had gone hungry. Using the latest NIDS-CRAM data, Professor Martin Wittenberg and Dr Nicola Branson at UCT estimate that in April 2021 approximately 10-million people and 3-million children were in a household affected by hunger in the past seven days.

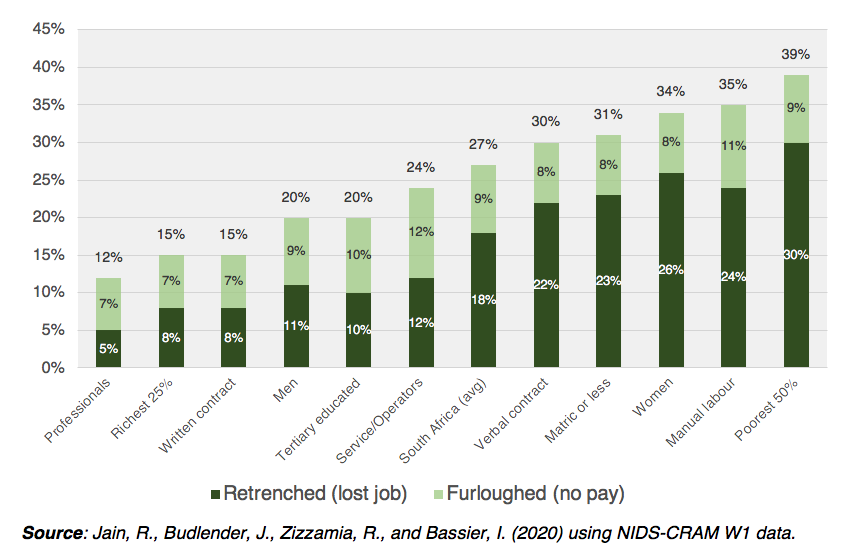

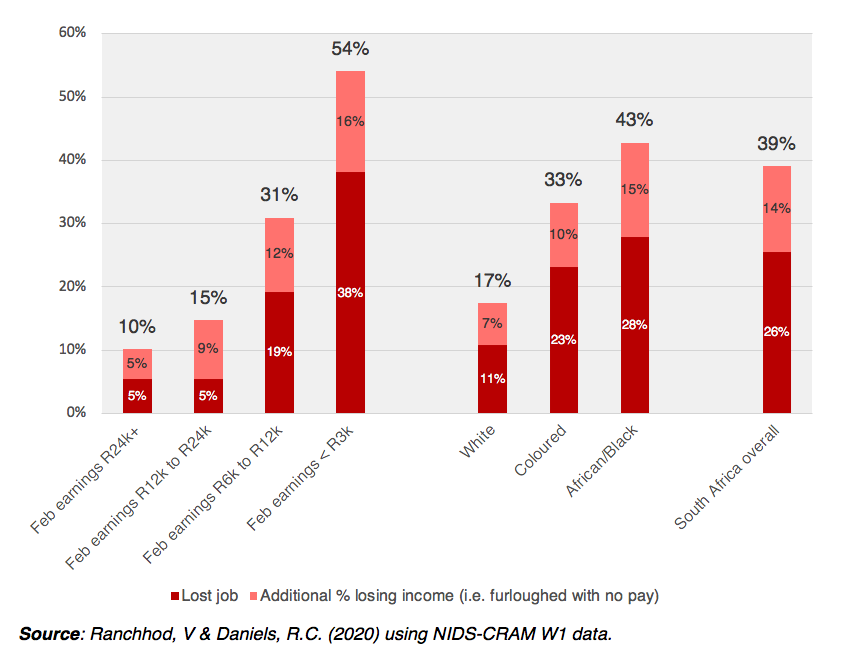

The study also revealed how differently the pandemic has affected South Africans. Although men’s employment had largely recovered to pre-pandemic levels by March 2021, women’s employment was still 8% lower than in February 2020. To add insult to injury, women have also not benefited from the two COVID-19 government relief grants (UIF-TERS and the R350 SRD grant) at the same rate as men, despite being worse affected by job losses. Women account for only 35-39% of the beneficiaries of these grants.

The latest set of results from NIDS-CRAM (Wave 5) is also the last round of data collection for this research project. The aim of NIDS-CRAM was to collect reliable data on a broadly nationally representative sample of South Africans to help policy-makers and the public make informed decisions in the immediate aftermath of the pandemic’s arrival. It was always scheduled to run for five waves, which are now complete. The NIDS-CRAM collaboration, made up of more than 30 colleagues and researchers from six South African universities, has written 67 research papers over the last year, covering everything from hunger and employment to vaccine acceptance and mental health (all papers are available at cramsurvey.org and the data can be downloaded from DataFirst). It has been an incredibly enriching experience working with such dedicated and collaborative academics, united in our belief in the importance of evidence and social justice.

Yet it has also cemented in my mind the critically important role that civil society has to play in holding the line in this democratic experiment we call South Africa. In different ways and at different times, civil society has stepped into the gap left by the government and held it to account. The investigative journalists at amaBhungane and Scorpio were the ones who exposed the rot of State Capture and the looting of state-owned enterprises like Transnet and Eskom, estimated to be at least R50-billion. And for what? For fancy suits and shitty weddings in Dubai?

There is a shamelessness about those who have been exposed, who refuse to resign in the face of blatant evidence of their corruption and moral debasement. We need to stop calling it “stepping aside.” This is euphemistic at best. These people remain on full pay, perhaps all the way until they go to jail, and even then they may still be paid. Just this month it was reported that former ANC councillor Sibongiseni Baba, sentenced to 10 years in prison for raping a party volunteer, is still being paid his monthly salary despite being behind bars. One wonders whether Zuma will still receive his R3-million taxpayer-funded annual salary while he is in jail. Perhaps that, too, is for the judicial branch of government to decide.

Like civil society, the judiciary and independent institutions have held the line, largely driven by the moral fortitude of people like Thuli Madonsela and Justices Zondo and Khampepe. It’s worth dwelling briefly on the powerful role they have played in our current constitutional realignment.

The role of the judiciary

There is a deep sense of poetic justice at play in South Africa’s Constitutional Court at the moment. Ten years ago, Jacob Zuma appointed Mogoeng Mogoeng as Chief Justice, a contentious appointment at the time. While most of his ten years were less controversial than expected, things went south at the end of last year when he led a prayer at a public event: “If there be any vaccine that is of the devil, meant to infuse triple-six in the lives of the people, meant to corrupt their DNA, Lord God Almighty may it be destroyed by fire, in the name of Jesus.” Soon afterwards he announced that he would be going on “long leave” until October 2021, when his decade-long tenure was due to end anyway.

In March 2021 President Ramaphosa appointed Sisi Khampepe as Acting Chief Justice. Originally appointed by Mandela as a TRC Commissioner in 1995, she went on to become a justice of the Constitutional Court. She was asked to act as Chief Justice because Deputy Chief Justice Raymond Zondo (who would normally take the role) had his hands full with the Zondo Commission.

In a 127-page judgment, Justice Khampepe lambasted Zuma, explaining that his conduct “smacks of malice” and that his accusations were “utterly bereft of supporting facts”. She concluded that in defying two summonses from the Zondo Commission, and then the Constitutional Court’s order compelling him to testify before it, he had acted in an “indubitably vexatious and reprehensible manner”, and she sentenced him to 15 months in prison. Her words are worth quoting verbatim:

“It would be nonsensical and counterproductive of this Court to grant an order with no teeth. Here, I repeat myself: court orders must be obeyed. If the impression were to be created that court orders are not binding, or can be flouted with impunity, the future of the Judiciary, and the rule of law, would indeed be bleak. I am simply unable to compel Mr Zuma’s compliance with this Court’s order, and am thus faced with little choice but to send a resounding message that such recalcitrance is unlawful and will be punished. I am mindful that, ‘having no constituency, no purse and no sword, the Judiciary must rely on moral authority’ to fulfil its functions” Acting Chief Justice Khampepe.

Indeed. The last three years have been a moral reckoning for South Africa. The judgment against Zuma has placed the Constitution front and centre in our national discourse, showing that everyone is equal before the law and that even presidents can go to jail. Yet that same Constitution also sets out other rights and obligations that we can no longer ignore. As Justice Khampepe reminds us, what ties us together as South Africans is about more than flesh and blood, or race and class. It is the ideal of a multiracial country where all have equal worth and where reconciliation is possible.

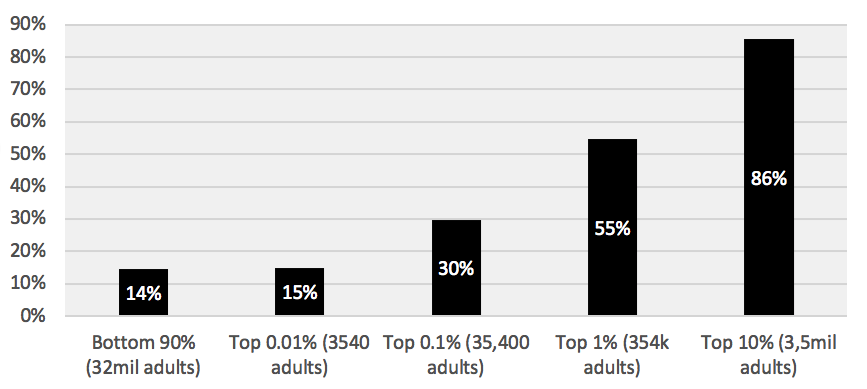

Yet reconciliation also means sharing wealth and protecting dignity. How can we claim with a straight face that we are all equal when 10-million South Africans and 3-million children experience hunger on a weekly basis? As rich South Africans we are failing our fellow citizens, a fact made even harder to swallow when one considers that it is we who have benefited the most since apartheid ended. I raise this here because almost everyone reading the Financial Mail is in the wealthiest 5% of South African society (i.e. earning more than R32,000 per month before tax and deductions). From research into the last two decades of tax data, we know that the wealthiest 5% of South Africans have been the main beneficiaries of post-apartheid economic growth. While this group is now multiracial, it is only within this group that the big gains have been made.

Research by Aroop Chatterjee, Léo Czajka & Amory Gethin presented last month shows that in 1994 the average White South African earned seven times as much as the average Black South African (7-to-1). By 2019 this ratio had fallen to 4-to-1. However, this was entirely driven by rising incomes among the richest 5% of Black South Africans. If you exclude that group, the ratio in 2019 is the same as in 1994. In a nutshell, racial income inequality in South Africa has come down since 1994, but only because of significant income growth among the richest 5% of Black South Africans, not because of improvements for the poorest 90%. Other research by Ihsaan Bassier and Ingrid Woolard shows that the trend continues right up to the top of the distribution: the real incomes of the wealthiest 1% of South Africans doubled between 2003 and 2016.

At its core, this is an issue of moral conviction. In a middle-income country no one should go hungry. In her judgment, Justice Khampepe reminds us that the country we aspire to be is founded on rights and obligations made explicit in our Constitution. These include not only the right to equality before the law, but also the guarantees that “Everyone has inherent dignity and the right to have their dignity respected and protected…Everyone has the right to have access to sufficient food and water.” No one needs to tell us that it is morally unacceptable that 1-in-6 South Africans experience hunger on a weekly basis. It is a blight on our national conscience and one that we can (and should) do something about.

//

This article first appeared in the Financial Mail on the 8th of July 2021.

Nic Spaull is an Associate Professor of Economics at Stellenbosch University and the co-Principal Investigator of NIDS-CRAM.