(This article first appeared in the Sunday Times on the 14th of January 2018).

The real matric pass rate and the real site of failure in education

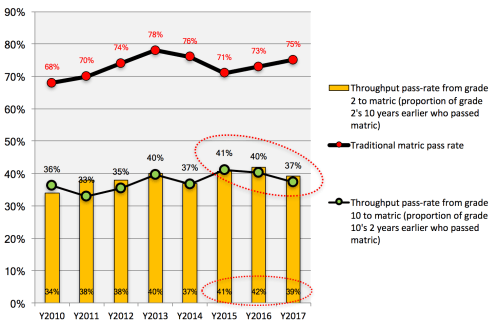

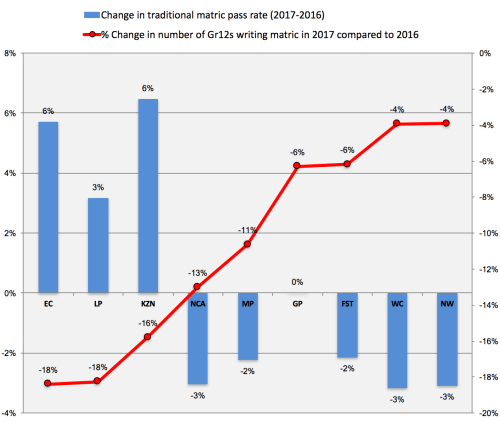

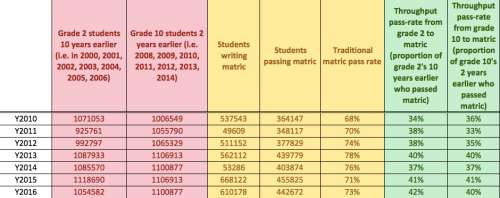

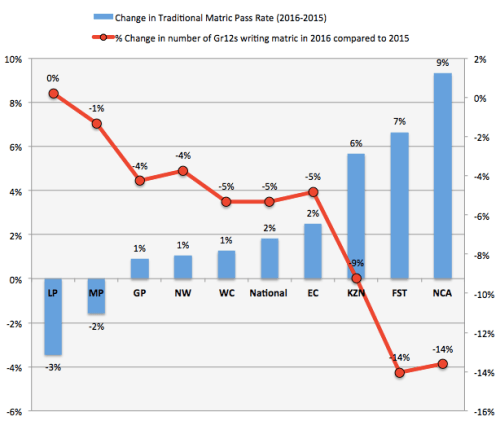

Every year the matric results come out and the whole country goes ballistic. Matric is on the front page of every newspaper and swamps the country's radio and TV stations. And suddenly everyone has an opinion about what’s going on in education. “The Free State is the best province”, “We should ban the IEB and have one exam”, “The rising pass rate shows our interventions are working”. No. No. No. None of this is true.

Firstly, the rising pass rate, from 73% in 2016 to 75% in 2017, is purely a function of more students being held back and dropping out. In 2017 there were only 401,435 passes compared to 442,672 in 2016; that’s a 9% decline in one year. So why were there about 40,000 fewer matric passes? It’s not because the population dropped by 9% in one year (it did go down, but only by about 4%). It’s not because more candidates moved to writing their matric part-time (and therefore aren’t included in the ‘official’ pass rate); there were only 11,462 additional part-timers in 2017 compared to 2016. And it’s definitely not because there are fewer progressed learners (there were 108,742 in 2016 and 107,430 in 2017, basically the same). So we know it’s not the cohort, it’s not part-timers, and it’s not progressed learners. So what is it? Basically, more kids dropped out or were held back in 2017 than in 2016.

It’s also no surprise that the only provinces with increasing matric pass rates (Eastern Cape +6%, Limpopo +3% and KwaZulu-Natal +6%) were also the provinces with the largest declines in the numbers writing matric (-18%, -18% and -16% respectively). So the high-level take-home is that the more you ‘cull’ and ‘gate-keep’, the more your matric pass rate increases. It also means we had 5% fewer Mathematics passes and 10% fewer Physical Science passes in 2017 than in 2016.
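The arithmetic behind these comparisons can be checked in a few lines. This is a minimal sketch using only the figures quoted above; the helper name `pct_change` is made up for illustration, and the last calculation rests on a deliberately generous assumption (that every additional part-timer would otherwise have passed as a full-time candidate), which the article itself does not make.

```python
# Figures quoted in the article.
passes_2016 = 442_672
passes_2017 = 401_435
progressed_2016 = 108_742
progressed_2017 = 107_430
extra_part_timers_2017 = 11_462


def pct_change(old, new):
    """Percentage change from old to new (negative means a decline)."""
    return (new - old) / old * 100


# Absolute and relative drop in the number of matric passes.
drop_in_passes = passes_2016 - passes_2017
passes_change = round(pct_change(passes_2016, passes_2017), 1)

# Change in the number of progressed learners.
progressed_change = round(pct_change(progressed_2016, progressed_2017), 1)

# ASSUMPTION (upper bound): every additional part-timer would have
# passed full-time. Even then they cover only part of the drop.
part_timer_share = round(extra_part_timers_2017 / drop_in_passes * 100, 1)

print(drop_in_passes)      # 41237
print(passes_change)       # -9.3
print(progressed_change)   # -1.2
print(part_timer_share)    # 27.8
```

Even under that generous assumption, the move to part-time candidacy accounts for well under a third of the missing passes, and the change in progressed learners is about one percent.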

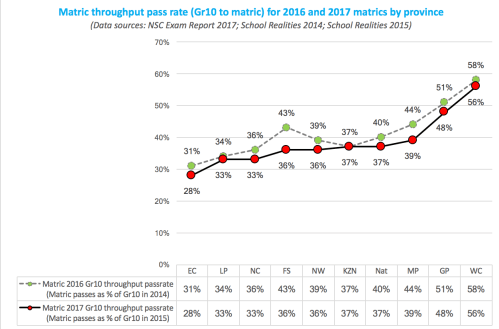

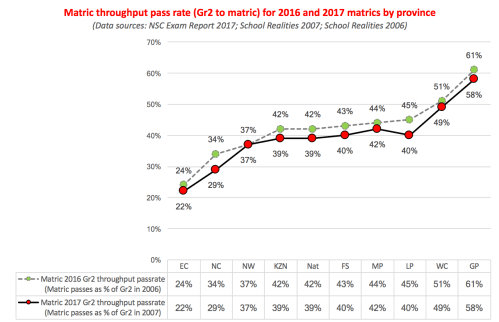

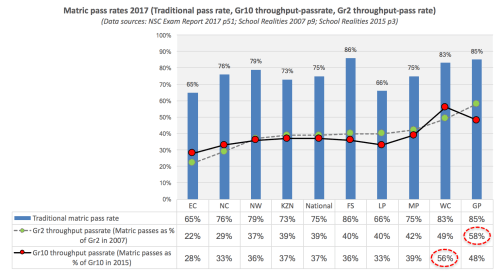

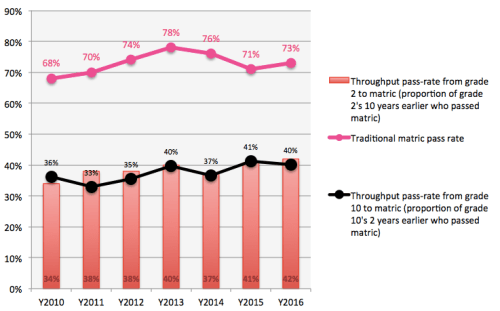

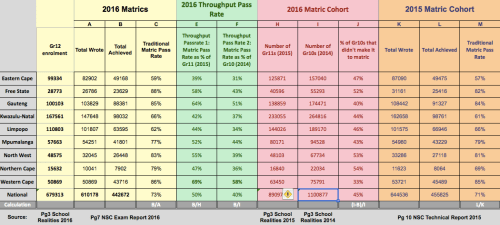

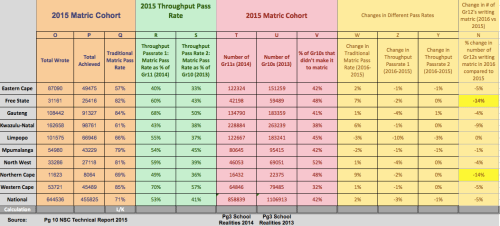

So what is the ‘real’ matric pass rate? A lot of people have been asking this question, myself included, and it turns out it’s a little more complicated than one might think. Occasionally we calculate ‘throughput pass rates’ (matric passes divided by Grade 10 enrolments 2 years earlier, or by Grade 2 enrolments 10 years earlier), yielding figures of around 39% for the 2017 Grade 10 throughput pass rate. This figure is incorrect. The problem is that at least 20% of Grade 10 enrolments are actually ‘repeaters’ and not part of the original cohort. There are also the complications of those writing supplementary exams, those getting part-time passes, and independent schools writing the NSC exams. The most authoritative research on this has been done by one of my colleagues at Stellenbosch University, Dr Martin Gustafsson, and according to his calculations the ‘true’ matric pass rate is about 55% and the province with the highest achievement is Gauteng. I believe that. While this is not as bad as the crude and incorrect figure of 39%, it is also not as high as the crude and incorrect figure of 75%. If one adds in youth who get some kind of non-matric qualification (e.g. through a technical or vocational college) the figure rises marginally to about 57%. So, 43% of youth in South Africa still get no qualification whatsoever. Not matric, not TVET, not university. Nothing. They enter the labour market ill-prepared and inherit lives of chronic poverty and sustained unemployment. Is it any wonder that since 2002 more than 40% of 18-24-year-olds in South Africa have been ‘NEET’: Not-Employed-or-in-Education-or-Training?
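To see why repeaters alone make the crude throughput figure too low, here is a rough sketch. It is not Dr Gustafsson's method. The Grade 10 enrolment number is a hypothetical round figure chosen only so that the crude rate comes out near the 39% quoted above, and the function name and parameters are invented for illustration. The pass count is the 2017 figure quoted earlier in the article.

```python
def throughput_pass_rate(passes, grade10_enrolment, repeater_share=0.0):
    """Matric passes divided by Grade 10 enrolment two years earlier.

    repeater_share is the fraction of that enrolment made up of
    repeaters, who are removed from the denominator.
    """
    if not 0 <= repeater_share < 1:
        raise ValueError("repeater_share must be in [0, 1)")
    first_time_enrolment = grade10_enrolment * (1 - repeater_share)
    return passes / first_time_enrolment


passes_2017 = 401_435      # from the article
grade10_2015 = 1_030_000   # HYPOTHETICAL, picked to give roughly 39%

crude = throughput_pass_rate(passes_2017, grade10_2015)
# The article says "at least 20%" of Grade 10 enrolments are repeaters.
adjusted = throughput_pass_rate(passes_2017, grade10_2015, repeater_share=0.20)

print(f"crude:    {crude:.1%}")     # crude:    39.0%
print(f"adjusted: {adjusted:.1%}")  # adjusted: 48.7%
```

Removing a 20% repeater share is the same as dividing the crude rate by 0.8, which on its own lifts it from about 39% to about 49%. The remaining distance to roughly 55% would presumably come from the other complications listed above (supplementary exams, part-time passes and independent schools), which this sketch does not model.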

So where do the wheels come off in the education system? The answer is long before matric. When children don’t learn to read for meaning in Grades 1-3 they fall further and further behind the curriculum, even as they are promoted into higher grades. Last month the 2016 Progress in International Reading Literacy Study (PIRLS) results were announced, and they showed that 78% of South African Grade 4 children could not read for meaning in any language (all 11 languages were tested). And if children don’t grasp the number concept, place value or the four operations by the time they hit Grade 4, they are on a one-way ticket to failure. The 2015 Trends in International Mathematics and Science Study (TIMSS) showed that 61% of Grade 5 students could not do basic mathematics.

I know that for those writing and passing matric it is a momentous and important occasion. It’s the culmination of our schooling cycle and (unfortunately) a rite of passage into middle-class life (note: necessary but not sufficient for said passage). But as a country I feel we need to take active steps to de-emphasize the matric results and instead focus on the ailing primary school system, the ugly step-child in our national saga. We don’t even have national assessments in primary school anymore. If the wheels come off by Grade 4, then that’s where we need to be intervening. Prevention is better than cure. A stitch in time saves nine. A matric-obsessed country misprioritizes its political and economic resources.

//

The DBE’s 2017 NSC Exam Report can be found here.